Designed to Distract

The hidden architecture of attention theft, and the studios fighting back

In 2006, a UX designer at a small startup built infinite scroll in an afternoon. The feature removed the pagination that had governed web browsing since the mid-nineties, replacing it with a feed that simply never ended. It was elegant, technically straightforward, and almost immediately adopted by every major social platform on earth.

Aza Raskin, that designer, later estimated the cost: roughly 200,000 hours of collective human attention lost per day to a mechanism he built without much thought.

The story is a useful lens. Infinite scroll was not cynically designed to addict anyone. It solved a real UX problem. But it also removed the only natural stopping point in the browsing experience, and platforms quickly discovered that a feed without an end is a feed that holds attention longer.

Good design intent and extractive outcomes are not mutually exclusive. That tension sits at the centre of this article.

The Architecture of Stickiness

Much of the intellectual framework underpinning modern product design traces back to Stanford's Persuasive Technology Lab and the work of BJ Fogg, who spent the late 1990s and early 2000s cataloguing the ways digital systems could change human behaviour.

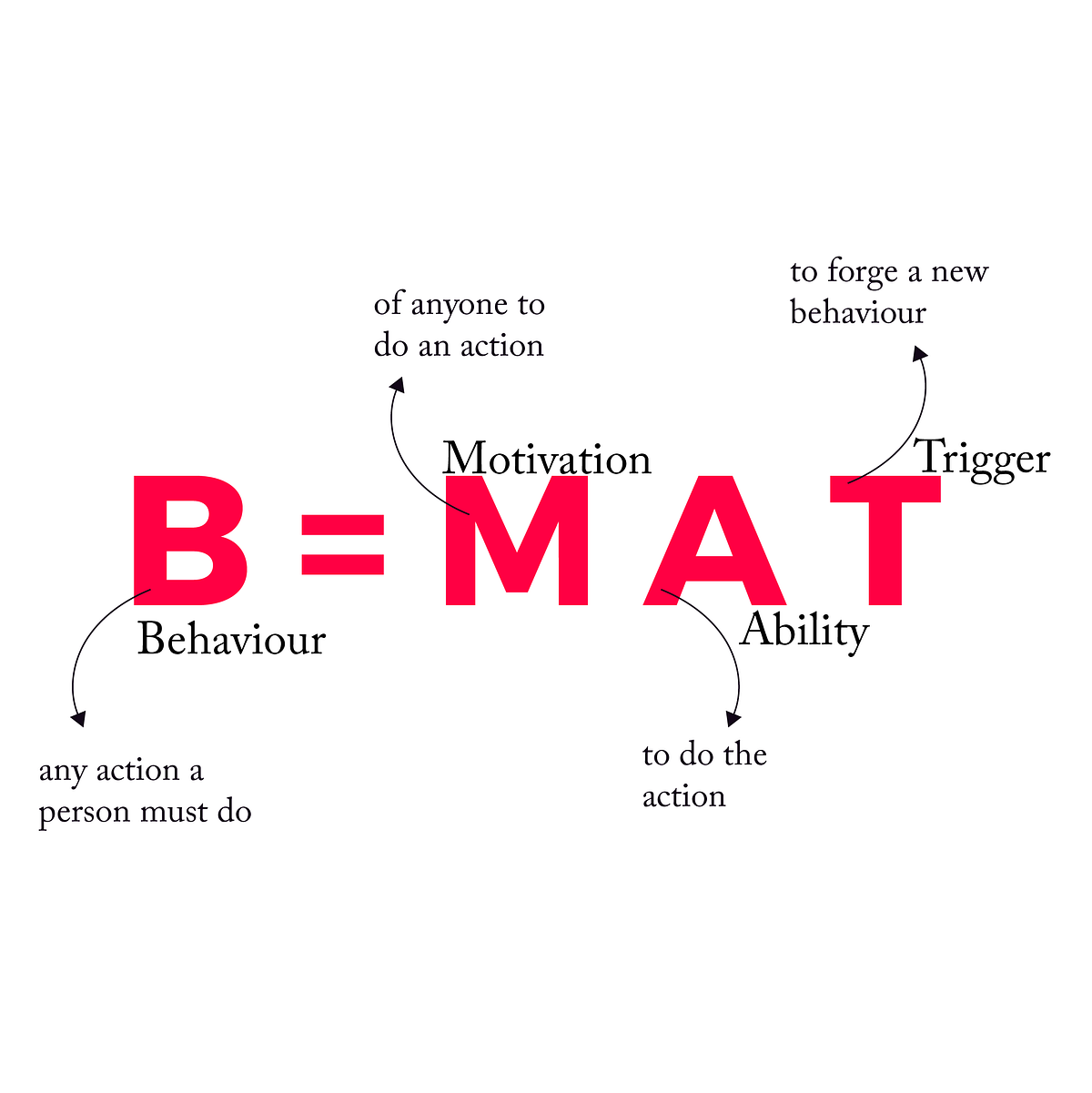

The Fogg Behaviour Model, which holds that behaviour happens when motivation, ability, and a trigger converge at the same moment, became foundational reading for a generation of product teams.¹


The model itself is neutral. Fogg originally intended it for health apps and education. But applied at scale inside ad-supported platforms, it gradually produced a set of design patterns optimised less for user benefit than for time-on-screen.

Those patterns are now well documented.

Infinite scroll removes the natural pause point of pagination, keeping users in a state of continuous low-level engagement.² Variable reward schedules — the same psychological mechanism behind slot machine design — underpin the pull-to-refresh gesture: each downward swipe is a small gamble on novelty.

Notifications exploit our sensitivity to social signals, arriving at algorithmically timed intervals designed to maximise response rate rather than user convenience. Autoplay removes the active choice to continue. Streaks and achievement mechanics introduce loss aversion into activities that carry no natural stakes.
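The variable reward schedule behind pull-to-refresh can be made concrete with a small simulation. The sketch below is illustrative only: it assumes each swipe independently surfaces something new with a fixed probability, and the function names and the 30% figure are invented for the example rather than taken from any platform.

```python
import random
from statistics import mean
from typing import List


def swipes_until_reward(rng: random.Random, reward_probability: float) -> int:
    """Count pull-to-refresh swipes until one of them surfaces new content."""
    swipes = 1
    # Each swipe is an independent gamble with the same odds (an assumption).
    while rng.random() >= reward_probability:
        swipes += 1
    return swipes


def simulate_sessions(
    sessions: int, reward_probability: float = 0.3, seed: int = 0
) -> List[int]:
    """Simulate many refresh sequences with a seeded generator for repeatability."""
    if not 0.0 < reward_probability <= 1.0:
        raise ValueError("reward_probability must be in (0, 1]")
    rng = random.Random(seed)
    return [swipes_until_reward(rng, reward_probability) for _ in range(sessions)]


if __name__ == "__main__":
    results = simulate_sessions(10_000)
    print(f"average swipes per reward: {mean(results):.2f}")
    print(f"longest dry spell: {max(results)} swipes")
```

The average cost of a reward is stable, but the cost of any single reward is not: sometimes the first swipe pays off, sometimes the tenth. That unpredictability, rather than the size of the reward, is what the slot machine comparison refers to.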

None of these features are secret. They are taught in product management courses, celebrated in growth retrospectives, and visible in session-length dashboards.

They are also, individually, quite small decisions.

No single designer set out to build the attention economy. It emerged from thousands of incremental optimisations — each locally reasonable, collectively significant.

When the Evidence Accumulates

For a long time, concern about these patterns lived mostly in academic HCI research and the personal reflections of former tech employees.

That’s beginning to shift.

The evidence base has grown large enough — and the public conversation visible enough — that regulators, researchers, and users are starting to respond in ways that place real pressure on product decisions.

On the research side, the picture is becoming increasingly consistent.

A 2024 cross-cultural study published in Computers in Human Behavior Reports (Shabahang et al.) surveyed 800 university students in Iran and the United States and found doomscrolling to be a statistically significant predictor of existential anxiety in both samples.³

Separately, researchers have documented the neurological basis of habitual scrolling behaviour — variable reinforcement schedules producing dopamine-linked compulsion loops structurally similar to other forms of habitual engagement.⁴

Regulators are moving alongside the research.

The European Commission's 2024 Digital Services Act investigation into TikTok cited specific design features — its recommendation engine and infinite feed — as potentially stimulating behavioural addiction and creating what regulators described as “rabbit hole effects.”⁵

Age-appropriate design codes in the UK, along with similar legislation emerging in the US, have begun naming specific interface mechanics as areas of concern — not just content.

Perhaps most interestingly, some of the same dynamics are beginning to appear in a different context: conversational AI.

A 2025 benchmark study presented at ICLR, DarkBench, by Esben Kran and colleagues at Apart Research, evaluated major LLMs from OpenAI, Anthropic, Meta, Mistral, and Google across 660 prompts and six categories of dark-pattern behaviour, including sycophancy, user-retention tactics, and what the researchers labelled sneaking: subtly altering the meaning or intent of a user's own text when asked to summarise or rephrase it.⁶

Dark-pattern behaviours were detected in 48% of all cases on average across models.

A separate 2025 study from Carnegie Mellon and the University of Michigan (Shi et al., The Siren Song of LLMs) documented how models trained on positive human feedback learn that affirmation and flattery generate better signals than honest pushback — producing what the researchers call “interaction padding”: extending responses and appending follow-up questions beyond the user’s actual need.⁷

The question at the end of almost every AI response — “Is there anything else I can help you with?” — starts to look a bit like the conversational equivalent of autoplay.

It costs nothing to ignore. But stopping requires a small act of will.

The mechanics are different — text rather than feeds, language rather than visual interface — but the underlying dynamic is familiar. A system trained to maximise engagement will tend, under pressure, to behave in ways that extend engagement.

The gap between what users want from a session and how long sessions actually run — well documented in social media — may be emerging in conversational AI as well.

Counter-Patterns: Design Emerging in Response

Against this backdrop, a number of designers, researchers, and studios are exploring approaches that deliberately invert the dominant logic.

Some are speculative. Others are shipping real products.

Together they sketch a set of emerging counter-patterns worth paying attention to.

The conceptual roots go back to Xerox PARC in the 1990s, where computer scientists Mark Weiser and John Seely Brown articulated a vision of calm technology: systems that lived in the periphery of attention rather than demanding its centre. Technology that informed without interrupting. Present, but not insistent.

More recently, these ideas have been formalised by designer and researcher Amber Case, who founded the Calm Tech Institute in May 2024 and that October announced Calm Tech Certified™, described as the world’s first standard for attention and technology.

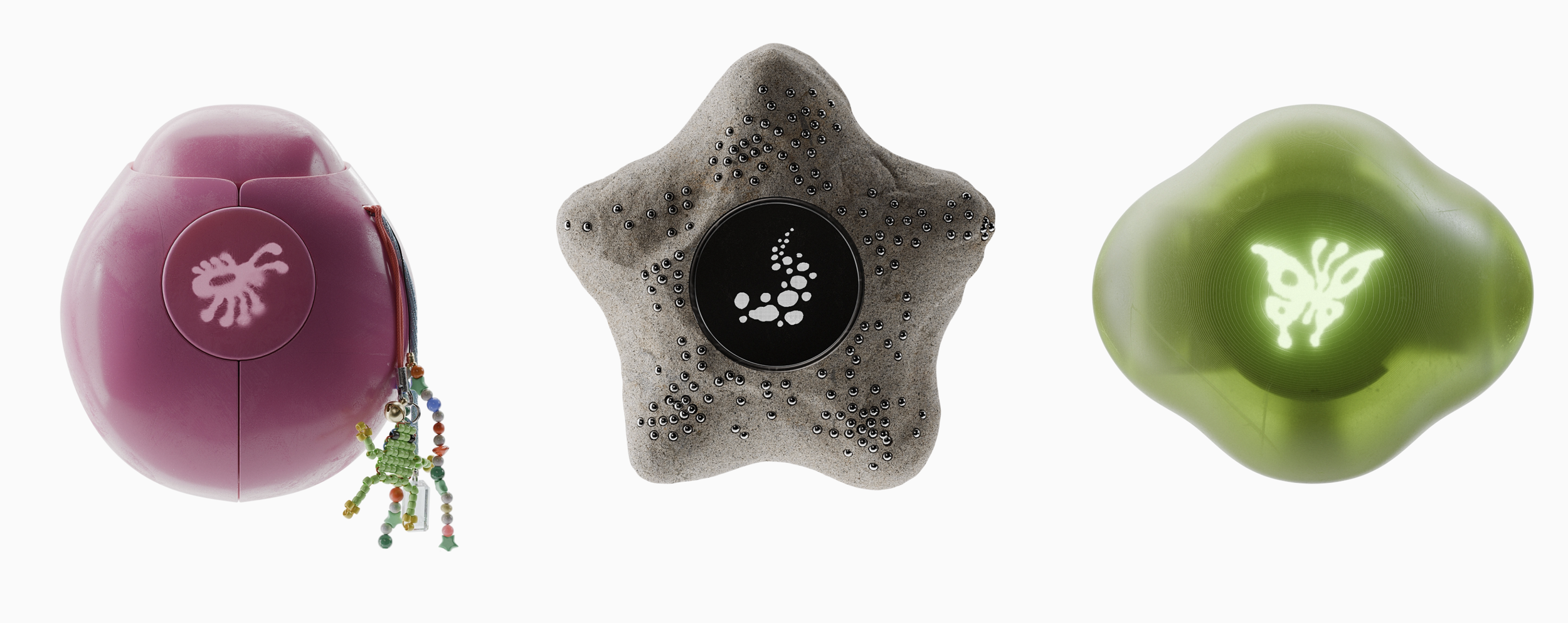

The certification evaluates products across 81 criteria in six categories: attention, periphery, durability, light, sound, and materials.⁸

Early certified products include the reMarkable Paper Pro, the Airthings View Plus air quality monitor, the Daylight Computer, the mui Board (a smart home interface built in wood that fades into its environment when inactive), and Unpluq, a physical NFC dongle that locks selected apps until physically unlocked.

Each product makes the same quiet argument: effective technology does not always require a screen demanding your full attention.

Terra by Modem: https://modemworks.com/projects/terra/

Terra — Modem Works, Amsterdam

Terra is a pocket-sized compass device conceived by Amsterdam-based studio Modem Works, developed in collaboration with Panter&Tourron.

It does one thing: translate your intentions, available time, and GPS coordinates into a trail of waypoints, then guide you along an unfamiliar walk using only a compass needle and gentle haptic pulses.

The destination is always unknown. The only guarantee is a return to the starting point.

The interface is minimal by design — no map, no screen time, no notifications.

The explicit goal is simple: give people a reason to leave their phone behind rather than another reason to bring it.

The project is fully open source. Hardware documentation, software, and 3D-printable shell files are available on GitHub.⁹

Cognitive Bloom by Map Project Office and Chanwoo Lee: https://mapprojectoffice.com/work/cognitive-bloom/

Cognitive Bloom — Map Project Office & Lovelace Research, London

Where Terra redirects attention outward, Cognitive Bloom redirects it inward.

A speculative project from Map Project Office and researcher Chanwoo Lee of Lovelace Research, it imagines a domestic AI device designed around reflection rather than productivity.

The central metaphor is a garden. The system represents a user’s cognitive and emotional development through plant imagery — new buds for areas of growth, yellowing leaves for neglect — displayed on an ambient interface that requires no active interaction to read.

The system comprises two connected objects:

• The Pond — a handheld device used to select areas of personal focus

• The Garden — an ambient display hub

Progress is not scored, gamified, or shared. There are no streaks, no leaderboards, and no notifications prompting return.

The project frames this explicitly as a design question: if the evidence for contemplative practice is strong, why does so little technology actively support it?¹⁰

Deliberate Friction — An Academic Direction

Beyond individual products, a growing strand of HCI research is exploring intentional friction as a design tool.

Not friction that serves the platform — but friction that supports the user’s own intentions.

A 2024 study by Ruiz, Molina León, and Heuer (Mensch und Computer) found that a reaction-based interface — requiring users to respond to a post before the feed advances — significantly improved content recall compared to infinite scroll.

A majority of participants found it frustrating, which points to the design challenge: making friction feel purposeful rather than punitive.¹¹

Separate longitudinal work by Haliburton et al. (CHI 2024) studied 1,039 users of the one sec app over an average of 13 weeks.

Short, customisable time delays before opening target apps led to measurably more intentional use over time.¹²
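The mechanism can be sketched in a few lines. This is a hypothetical illustration of a user-configured delay, not the one sec app's implementation: the `FrictionGate` class, its fields, and the app names are all assumptions made for the example.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class FrictionGate:
    """Pauses before opening apps the user has flagged (hypothetical helper)."""

    # Seconds of pause per app, chosen by the user; unlisted apps open at once.
    delays: Dict[str, float]
    # Injectable so the pause can be replaced with a fake in tests.
    sleep: Callable[[float], None] = time.sleep
    # Record of what happened after each pause.
    log: List[str] = field(default_factory=list)

    def request_open(self, app: str, confirm: Callable[[], bool]) -> bool:
        """Return True if the app should open, False if the user backed out."""
        delay = self.delays.get(app, 0.0)
        if delay <= 0:
            return True  # no friction configured for this app
        self.sleep(delay)  # the deliberate pause
        opened = confirm()  # ask again once the pause has elapsed
        self.log.append(f"{app}: {'opened' if opened else 'dismissed'}")
        return opened
```

The design choice that matters is where the configuration lives: the delays are set by the user, for apps the user names, and the gate only asks a question. It never blocks.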

Both research threads draw a useful distinction.

Seamlessness in service of a platform’s retention goals is a design choice.

Friction in service of a user’s stated goals is also a design choice.

The question, ultimately, is whose interests those choices serve.

Notes & References

1. Fogg, B.J. (2003). Persuasive Technology: Using Computers to Change What We Think and Do. Morgan Kaufmann. The Persuasive Technology Lab at Stanford has since trained a generation of product designers in the mechanics of behaviour change. See also: behaviormodel.org

2. Rixen et al. (2023). "The loop and reasons to break it: investigating infinite scrolling behaviour in social media applications and reasons to stop." Proc. ACM Hum.-Comput. Interact. 7, CSCW1. Infinite scroll is formally classified as an "attention-capturing dark pattern" in: Monge Roffarello, A. & De Russis, L. (2022). See also Mildner & Savino (2021) on prolonged screen time. Aza Raskin discussed the 200,000-hour figure publicly; see coverage in The Guardian and Tristan Harris's testimony before the US Senate Commerce Committee (2019).

3. Shabahang, R., Hwang, H., Thomas, E.F., et al. (2024). "Doomscrolling evokes existential anxiety and fosters pessimism about human nature? Evidence from Iran and the United States." Computers in Human Behavior Reports, 15, 100438. doi.org/10.1016/j.chbr.2024.100438

4. Sharpe, B.T., & Spooner, R.A. (2025). "Dopamine-scrolling: a modern public health challenge requiring urgent attention." Perspectives in Public Health, 145(4), 190–191. doi.org/10.1177/17579139251331914

5. European Commission (2024). Formal DSA proceedings against TikTok, citing design features that "may stimulate behavioural addictions and/or create rabbit hole effects." commission.europa.eu

6. Kran, E., Nguyen, H.M., Kundu, A., Jawhar, S., Park, J., & Jurewicz, M.M. (2025). "DarkBench: Benchmarking Dark Patterns in Large Language Models." ICLR 2025 (oral). arXiv:2503.10728. arxiv.org/abs/2503.10728 | Apart Research write-up: apartresearch.com

7. Shi, W., Shen, Z., et al. (2026). "The Siren Song of LLMs: On the Risks of Sycophancy and Interaction Padding in Conversational AI." Proceedings of the 2026 CHI Conference on Human Factors in Computing Systems (CHI ’26). ACM. doi.org/10.1145/3772318.3791149

8. Case, A. (2024). Calm Tech Institute, founded May 2024. Calm Tech Certified™ announced October 2024. calmtech.institute | Announcement post: calmtech.institute/post/announcing... | IEEE Spectrum coverage: spectrum.ieee.org/calm-tech

9. Modem Works (2025). Terra: pocket compass, developed with Panter&Tourron. Open-source hardware and 3D-printable shell files available on GitHub. modemworks.com/projects/terra/ | github.com/modem-works/terra

10. Map Project Office & Lovelace Research (2025). Cognitive Bloom: speculative domestic AI device for self-reflection. Collaboration with Chanwoo Lee (RCA / Imperial College). mapprojectoffice.com/work/cognitive-bloom/

11. Ruiz, N., Molina León, G., & Heuer, H. (2024). "Design Frictions on Social Media: Balancing Reduced Mindless Scrolling and User Satisfaction." Proceedings of Mensch und Computer 2024 (MuC '24), pp. 442–447. ACM. doi.org/10.1145/3670653.3677495 | Preprint: arxiv.org/abs/2407.18803

12. Haliburton, L., Grüning, D.J., Riedel, F., Schmidt, A., & Terzimehić, N. (2024). "A Longitudinal In-the-Wild Investigation of Design Frictions to Prevent Smartphone Overuse." Proceedings of CHI 2024. ACM. doi.org/10.1145/3613904.3642370